| Online proceedings with links to ACM Digital Library are available here |

| Information for presentation: |

| - Presentation time (including Q&A): 25 minutes for full papers and 15 minutes for short papers. |

| - Input: VGA |

| - Screen: 4:3 aspect ratio |

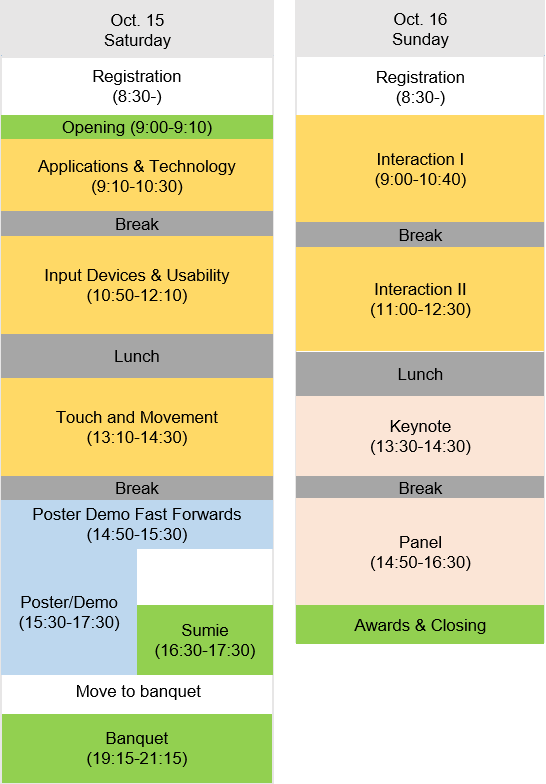

| Applications & Technology (Oct.15, 9:10-10:30)

Chair: Daisuke Iwai (Osaka University) |

| Thumbs-Up: 3D Spatial Thumb-Reachable Space for One-Handed Thumb Interaction on Smartphones |

| Khalad Hasan, Junhyeok Kim, David Ahlström, Pourang Irani |

| Moving Ahead with Peephole Pointing: Modelling Object Selection with Head-Worn Display Field of View Limitations |

| Barrett Ens, David Ahlström, Pourang Irani |

| Improving Interaction in HMD-Based Vehicle Simulators through Real Time Object Reconstruction |

| Michael Bottone, Kyle Johnsen |

| Exploring Immersive Interfaces for Well Placement Optimization in Reservoir Models |

| Roberta Cabral Mota, Stephen Cartwright, Ehud Sharlin, Mario Costa Sousa |

| Input Device & Usability (Oct.15, 10:50-12:10)

Chair: Wolfgang Stuerzlinger (Simon Fraser University) |

| A Metric for Short-Term Hand Comfort and Discomfort: Exploring Hand Posture Evaluation |

| Jonas Mayer, Nicholas Katzakis |

| Improving Gestural Interaction With Augmented Cursors |

| Ashley Dover, G. Michael Poor, Darren Guinness, Alvin Jude |

| Desktop Orbital Camera Motions using Rotational Head Movements |

| Thibaut Jacob, Gilles Bailly, Eric Lecolinet, Géry Casiez, Marc Teyssier |

| On Your Feet! Enhancing Vection in Leaning-Based Interfaces through Multisensory Stimuli |

| Ernst Kruijff, Alexander Marquardt, Christina Trepkowski, Robert Lindeman, Andre Hinkenjann, Jens Maiero, Bernhard E. Riecke |

| Touch and Movement (Oct.15, 13:10-14:30)

Chair: Ernst Kruijff (Bonn-Rhein-Sieg University of Applied Sciences) |

| A Non-grounded and Encountered-type Haptic Display Using a Drone |

| Kotaro Yamaguchi, Ginga Kato, Yoshihiro Kuroda, Kiyoshi Kiyokawa, Haruo Takemura |

| Enhancement of Motion Sensation by Pulling Clothes |

| Erika Oishi, Masahiro Koge, Sugarragchaa Khurelbaatar, Hiroyuki Kajimoto |

| Impact of Motorized Projection Guidance on Spatial Memory |

| Hind Gacem, Gilles Bailly, James Eagan, Eric Lecolinet |

| Inducing Body-Transfer Illusions in VR by Providing Brief Phases of Visual-Tactile Stimulation |

| Oscar Ariza, Jann Freiwald, Nadine Laage, Michaela Feist, Mariam Salloum, Gerd Bruder, Frank Steinicke |

| Interaction I (Oct.16, 9:00-10:40)

Chair: Barrett Ens (University of Manitoba) |

| Interacting with Maps on Optical Head-Mounted Displays |

| David Rudi, Ioannis Giannopoulos, Peter Kiefer, Christian Peier, Martin Raubal |

| Touching the Sphere: Leveraging Joint-Centered Kinespheres for Spatial User Interaction |

| Paul Lubos, Gerd Bruder, Oscar Ariza, Frank Steinicke |

| Optimising Free Hand Selection in Large Displays by Adapting to User's Physical Movements |

| Xiaolong Lou, Andol X. Li, Ren Peng, Preben Hansen |

| Locomotion in Virtual Reality for Individuals with Autism Spectrum Disorder |

| Evren Bozgeyikli, Andrew Raij, Srinivas Katkoori, Rajiv Dubey |

| Interaction II (Oct.16, 11:00-12:30)

Chair: Kyle Johnsen (University of Georgia) |

| SHIFT-Sliding and DEPTH-POP for 3D Positioning |

| Junwei Sun, Wolfgang Stuerzlinger, Dmitri Shuralyov |

| Preference Between Allocentric and Egocentric 3D Manipulation in a Locally Coupled Configuration |

| Paul Issartel, Lonni Besançon, Florimond Guéniat, Tobias Isenberg, Mehdi Ammi |

| Providing Assistance for Orienting 3D Objects Using Monocular Eyewear |

| Mengu Sukan, Carmine Elvezio, Steven Feiner, Barbara Tversky |

| Combining Ring Input with Hand Tracking for Precise, Natural Interaction with Spatial Analytic Interfaces |

| Barrett Ens, Ahmad Byagowi, Teng Han, Juan David Hincapié-Ramos, Pourang Irani |

| Demo (Oct.15, Fast Forwards 14:50-15:30, Demo 15:30-17:30) |

| Sharpen Your Carving Skills in Mixed Reality Space |

| Maho Kawagoe, Mai Otsuki, Fumihisa Shibata, Asako Kimura |

| Stickie: Mobile Device Supported Spatial Collaborations |

| Jaskirat S. Randhawa |

| Shift-Sliding and Depth-Pop for 3D Positioning |

| Junwei Sun, Wolfgang Stuerzlinger, Dmitri Shuralyov |

| Developing Interoperable Experiences with OpenUIX |

| Mikel Salazar, Carlos Laorden |

| TickTockRay Demo: Smartwatch Raycasting for Mobile HMDs |

| Daniel Kharlamov, Krzysztof Pietroszek, Liudmila Tahai |

| Poster (Oct.15, Fast Forwards 14:50-15:30, Poster 15:30-17:30) |

| Mushi: A Generative Art Canvas for Kinect Based Tracking |

| Jennifer Weiler, Sudarshan Seshasayee |

| AR Tabletop Interface Using an Optical See-Through HMD |

| Nozomi Sugiura, Takashi Komuro |

| Coexistent Space: Collaborative Interaction in Shared 3D Space |

| Ji-Yong Lee, Joung-Huem Kwon, Sang-Hun Nam, Joong-Jae Lee, Bum-Jae You |

| Development of a Toolkit for Creating Kinetic Garments Based on Smart Hair Technology |

| Mage Xue, Masaru Ohkubo, Miki Yamamura, Hiroko Uchiyama, Takuya Nojima, Yael Friedman |

| Large Scale Interactive AR Display Based on a Projector-Camera System |

| Chun Xie, Yoshinari Kameda, Kenji Suzuki, Itaru Kitahara |

| TickTockRay: Smartwatch Raycasting for Mobile HMDs |

| Krzysztof Pietroszek, Daniel Kharlamov |

| 3D Camera Pose History Visualization |

| Mayra Donaji Barrera Machuca, Wolfgang Stuerzlinger |

| Social Spatial Mashup for Place and Object-based Information Sharing |

| Choonsung Shin, Youngmin Kim, Jisoo Hong, Sunghee Hong, Hoonjong Kang |

| Real-time Sign Language Recognition with Guided Deep Convolutional Neural Networks |

| Zhengzhe Liu, Fuyang Huang, Wai Lan Tang, Felix Yim Binh Sze, Jing Qin, Xiaogang Wang, Qiang Xu |

| Window-Shaping: 3D Design Ideation in Mixed Reality |

| Ke Huo, Vinayak, Karthik Ramani |

| KnowWhat: Mid Field Sensemaking for the Visually Impaired |

| Sujeath Pareddy, Abhay Agarwal, Manohar Swaminathan |

| Katsukazan: An Intuitive iOS App for Informing People About Volcanic Activity in Japan |

| Paul Haimes, Tetsuaki Baba |

| Empirical Method for Detecting Pointing Gestures in Recorded Lectures |

| Xiaojie Zha, Marie-luce Bourguet |

| Arm-Hidden Private Area on an Interactive Tabletop System |

| Kai Li, Asako Kimura, Fumihisa Shibata |

| AnyOrbit: Fluid 6DOF spatial navigation of virtual environments using orbital motion |

| Benjamin Outram, Yun Suen Pai, Kevin Fan, Kouta Minamizawa, Kai Kunze |

| KnowHow: Contextual Audio-Assistance for the Visually Impaired in Performing Everyday Tasks |

| Abhay Agarwal, Sujeath Pareddy, Manohar Swaminathan |

| Effect of using Walk-In-Place Interface for Panoramic Video Play in VR |

| Azeem Syed Muhammad, Sang Chul Ahn, Jae-In Hwang |

| Using Area Learning in Spatially-Aware Ubiquitous Environments |

| Edwin Chan, Yuxi Wang, Teddy Seyed, Frank Maurer |

| MocaBit 2.0: A Gamified System to Examine Behavioral Patterns through Granger Causality |

| Sanghyun Yoo, Sudarshan Seshasayee |

| Fast and Accurate 3D Selection using Proxy with Spatial Relationship for Immersive Virtual Environments |

| Jun Lee, Ji-Hyung Park, JuYoung Oh, JoongHo Leek |

| Haptic Exploration of Remote Environments with Gesture-based Collaborative Guidance |

| Seokyeol Kim, Jinah Park |

| Subliminal Reorientation and Repositioning in Virtual Reality During Eye Blinks |

| Eike Langbehn, Gerd Bruder, Frank Steinicke |

| Multimodal Embodied Interface for Levitation and Navigation in 3D Space |

| Monica Perusquia-Hernandez, Tiago Martins, Takahisa Enomoto, Mai Otsuki, Hiroo Iwata, Kenji Suzuki |

| Acquario: A Tangible Spatially-Aware Tool for Information Interaction and Visualization |

| Sydney Pratte, Teddy Seyed, Frank Maurer |

| Grasp, Grab or Pinch? Identifying User Preference for In-Air Gestural Manipulation |

| Alvin Jude, G. Michael Poor, Darren Guinness |

| Biometric Authentication Using the Motion of a Hand |

| Satoru Imura, Hiroshi Hosobe |